Cutting Big Data Down to Size

Editor's View

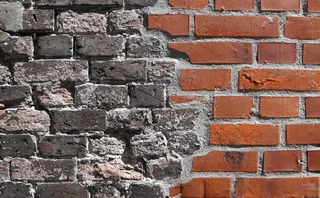

Last week, I wrote about a choice that may need to be made between cloud resources and the Hadoop tool for managing and working with big data. A better way to frame the debate could be to ask how best to use cloud computing for data management, especially for "big data."

Tim Vogel, a veteran of data management projects for several major investment firms and service providers on Wall Street, has focused views on this subject. He advises that the cloud is best used for the most immediate real-time data and analytics. As an example, Vogel says the cloud would be an appropriate resource if one were concerned with the most recent five minutes of data used in volume-weighted average price (VWAP) calculations.
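To make the five-minute VWAP example concrete, here is a minimal Python sketch of the calculation: the sum of price times volume divided by the sum of volume, restricted to a trailing window. The `Trade` record, the `trailing_vwap` function and the sample figures are illustrative assumptions, not anything Vogel or a particular vendor has described.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional


@dataclass(frozen=True)
class Trade:
    """Hypothetical trade record; field names are assumptions for illustration."""
    timestamp: datetime
    price: float
    volume: int


def trailing_vwap(
    trades: Iterable[Trade],
    now: datetime,
    window: timedelta = timedelta(minutes=5),
) -> Optional[float]:
    """Return VWAP over trades in (now - window, now], or None if nothing traded."""
    cutoff = now - window
    recent = [t for t in trades if cutoff < t.timestamp <= now]
    total_volume = sum(t.volume for t in recent)
    if total_volume == 0:
        return None  # no trades in the window, so VWAP is undefined
    return sum(t.price * t.volume for t in recent) / total_volume


# Made-up sample data: one stale trade and two inside the five-minute window.
now = datetime(2024, 1, 31, 15, 0, 0)
sample_trades = [
    Trade(now - timedelta(minutes=10), 90.0, 1000),  # outside the window
    Trade(now - timedelta(minutes=4), 100.0, 100),
    Trade(now - timedelta(minutes=1), 102.0, 300),
]
print(trailing_vwap(sample_trades, now))  # 101.5
```

Only the two trades inside the window contribute, which is the point of the example: the working set for this kind of analytic is a small slice of the day's data.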

"The cloud isn't cheap," he says. "Its best use is not for data on a security that hasn't traded in two weeks. Unless the objective is to cover the complete global universe, like [agency broker and trading technology provider] ITG does." Vogel points to intra-day pricing and intra-day analytics as tasks that could be enhanced, accelerated or otherwise improved upon through use of cloud computing resources. Data managers should think of securities data in two layers—a descriptive or identification layer and a pricing layer—both of which have to be processed and filtered to generate usable data that goes into cloud resources.

The active universe of securities as a whole, which includes fundamental data and analytics on securities, is really a super-set of what firms are trying to handle in terms of data on a daily basis, observes Vogel. With that in mind, the task for applying cloud computing to big data could actually be making big data smaller, or breaking it down into parts: cutting it down to size. That should reduce the bandwidth needed to send data to the cloud and retrieve it, and the effort needed to keep local data and cloud-stored data consistently reconciled.
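One way to picture "cutting it down to size" is a filter that separates recently traded securities from dormant ones before anything is sent to the cloud. The sketch below is only an illustration: the function name, the identifiers and the 14-day threshold (borrowed from Vogel's "two weeks" remark, and treated here as inclusive) are assumptions.

```python
from datetime import date, timedelta
from typing import Dict, List, Tuple


def split_by_activity(
    last_trade_dates: Dict[str, date],
    as_of: date,
    max_idle: timedelta = timedelta(days=14),  # assumed cutoff, not a stated rule
) -> Tuple[List[str], List[str]]:
    """Split security IDs into (active, dormant) by days since the last trade."""
    active: List[str] = []
    dormant: List[str] = []
    for security_id, last_traded in sorted(last_trade_dates.items()):
        if as_of - last_traded <= max_idle:
            active.append(security_id)   # candidate for cloud processing
        else:
            dormant.append(security_id)  # stays in cheaper local storage
    return active, dormant


# Made-up identifiers and dates, purely for illustration.
as_of = date(2024, 1, 31)
last_trade_dates = {
    "AAA": date(2024, 1, 30),  # traded yesterday
    "BBB": date(2024, 1, 10),  # idle for three weeks
    "CCC": date(2024, 1, 17),  # idle for exactly two weeks
}
active, dormant = split_by_activity(last_trade_dates, as_of)
print(active, dormant)  # ['AAA', 'CCC'] ['BBB']
```

A real implementation would also need to carry the descriptive and pricing layers for each active security, but the selection step itself can be this simple.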

If nothing else, this is certainly a different way of looking at handling big data. It is worth considering whether going against the conventional or prevailing wisdom could lead data managers to a better way. Inside Reference Data would like to know what you think about this. We've reactivated our LinkedIn discussion group, where you can keep up with new stories being posted online, follow live tweets covering conference discussions, and respond to questions and opinion pieces from IRD.


Copyright Infopro Digital Limited. All rights reserved.


More on Data Management

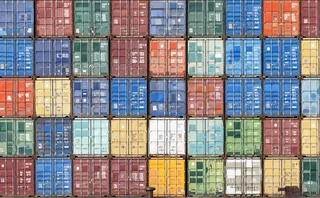

Navigating the tariffs data minefield

The IMD Wrap: In an era of volatility and uncertainty, what datasets can investors employ to understand how potential tariffs could impact them, their suppliers, and their portfolios?

Project Condor: Inside the data exercise expanding Man Group’s universe

Voice of the CTO: The investment management firm is strategically restructuring its data and trading architecture.

Tariffs, data spikes, and having a ‘reasonable level of paranoia’

History doesn’t repeat itself, but it rhymes. Covid brought a “new normal” and a multitude of lessons that markets—and people—are still learning. New tariffs and global economic uncertainty mean it’s time to apply them, ready or not.

HSBC’s former global head of market data to grow Expand Research consulting arm

The business will look to help pull together the company’s existing data optimization offerings.

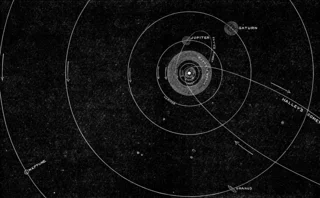

Stocks are sinking again. Are traders better prepared this time?

The IMD Wrap: The economic indicators aren’t good. But almost two decades after the credit crunch and financial crisis, the data and tools that will allow us to spot potential catastrophes are more accurate and widely available.

In data expansion plans, TMX Datalinx eyes AI for private data

After buying Wall Street Horizon in 2022, the Canadian exchange group’s data arm is looking to apply a similar playbook to other niche data areas, starting with private assets.

Saugata Saha pilots S&P’s way through data interoperability, AI

Saha, who was named president of S&P Global Market Intelligence last year, details how the company is looking at enterprise data and the success of its early investments in AI.

Data partnerships, outsourced trading, developer wins, Studio Ghibli, and more

The Waters Cooler: CME and Google Cloud reach second base, Visible Alpha settles in at S&P, and another overnight trading venue is approved in this week’s news round-up.